In part 1, I listed six scenarios that could potentially cause ExecuteSQL code to break:

…and managed to get through the first five before running out of steam. This time, we’re going to examine #6 on the list, and then look at a few miscellaneous odds ’n’ ends, and today’s demo file is ExecuteSQL Sandbox, v2, if you’d like to follow along.

Changing a Field Type

Why would changing a field’s type cause a problem? Well, first off, as I mentioned last month, if you are using that particular field as a predicate in a JOIN, the query will break, and your reward will be the the dreaded “?”.

I don’t have a fix for this, just some advice: Don’t change a field’s type if you’ve used it as a JOIN predicate in a working SQL statement. (The only reason I’ve needed to change a field type recently was to fix a broken JOIN where the field types were mismatched.)

But let’s suppose you haven’t used a particular field as a JOIN predicate, is it then safe to change its type? Yes, if you take some precautions. The first precaution you can take is to construct your queries using dynamic parameters whenever possible, because then you don’t have to worry about the underlying field type.

(If that isn’t 100% clear, it was discussed in detail in the “Numbers” section of FM 12 ExecuteSQL: Dynamic Parameters, pt 1.)

But, as we saw in FM 12 ExecuteSQL: Dynamic Parameters, pt 2, if you are constructing an IN argument “on the fly” (creating it at runtime), then you will most likely want to go the standard route. Here’s an example of a query using an IN operator:

ExecuteSQL(

" SELECT MAX ( sales )

FROM customers

WHERE state IN ('WA','OR','ID') "

; "" ; ""

)

Since “state” is a text field, each IN element has been wrapped in single quotes. That’s straight-forward, and no one’s likely to change that field type from text to number.

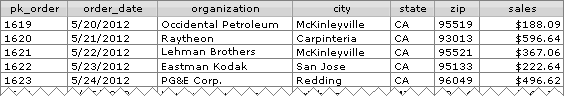

But what about the pk_order field in today’s demo file?

It looks like a number, but it could be a text field… which means if we decide to reference that field as part of an IN clause, it’s not immediately clear whether we should do this:

WHERE pk_order IN ( '110','230','1479' )

or this:

WHERE pk_order IN ( 110,230,1479 )

…is it? Now it happens that pk_order is a text field, so therefore the first version is correct, and let’s suppose that you’ve used that version in some mission-critical routine. Well, you know what’s going to happen if you change pk_order to a number field, don’t you? Your mission-critical routine goes up in smoke, along with your reputation as a careful developer.

What we need is a custom function to correctly format the IN arguments based on the type of the referenced field, like this:

In case it isn’t clear, the only difference between the two entries is that the first references a text field and the second references a number field. And if you’re wondering about the double quotes around the field name, that was explained in part 1.

Here’s the CF definition:

And here’s how the custom function might be used in a query.

Or, since the original point of this was to facilitate “on-the-fly” IN arguments:

On a related note, I can see that it’s time to upgrade the FoundSetToSQL custom function that I introduced last year (Custom Functions for Internal SQL). Here’s what I had to say about it at the time:

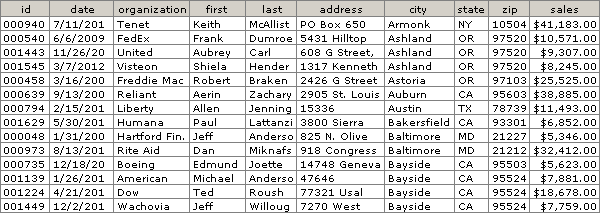

FoundSetToSQL – the concept of a found set does not exist in SQL, but sometimes we need a SQL command to operate only on the records in our current found set. What to do? Well, it turns out that we can say, “Hey, SQL, only process records if the value in a certain field matches one of the values in this list: a,b,c,d,e,…”, and we can build that list from values in the current FileMaker found set. Take for example this found set of 14 records:

FoundSetToSQL ( customers::id ) will return the following…

'000940','000540','001443','001545','000458','000639',

'000794','001629','000048','000973','000735','001139',

'001224','001449'

…as one unbroken string of text. And this can be used by the SQL “IN” operator as part of a WHERE clause, as per this statement, which generates a list of unique cities in the found set.

Warning: FoundSetToSQL is a) not fast with large found sets, and b) subject to the standard 10,000 iteration recursion limit… you don’t want to point it at a found set with more than 10,000 records.

At the time, I either was unaware of, or conveniently chose to ignore, the “underlying field type” issue. This has been remedied in today’s demo… the CF now correctly detects the field type and formats the IN arguments accordingly. I by no means consider this CF to be the last word on the subject, and welcome any suggestions for improvement.

Other Observations

Today’s demo includes a basic set of tables logically related like so:

ORDERS ---< LINEITEMS >--- PRODUCTS

…but of course, since this is SQL we’re talking about, I was quite proud of the fact that they weren’t actually linked on my Relationships Graph:

When I decided to define orders::sales like so, there were two problems. First the error message…

Unfortunately, FileMaker doesn’t want you to indirectly use GetFieldName from inside a custom function, in this case GFN and GTN, in a calculated field definition to point to an unrelated field. But where there’s a will there’s a way, so I temporarily linked orders to line_items, like so…

…which allowed me to finish defining orders::sales. Then I went back into the Relationships Graph, broke the link between orders and line_items, and guess what? The calculation for orders::sales continued to work, and I was pretty pleased with myself. Unfortunately, I soon discovered a second problem: performance. For example, running standard FileMaker searches on orders::sales just felt unacceptably slow. So I a) re-linked orders and line_items on the Relationships Graph, and then b) redefined orders::sales as…

…and no more sluggishness. Lesson: use SQL where it makes sense to use it, but don’t necessarily expect it to outperform native FileMaker functionality.

The demo also contains some examples using the LIKE operator, with its two wildcards, _ for a single character and % for multiple characters.

Note in the second example that by invoking LOWER on the source field, the LIKE criteria effectively becomes case-insensitive.

Also, I knew there was a product that had “Telegraphe” in its name…

…and I wanted to generate a list of customers who had purchased it, so this gave me an opportunity to not only use LIKE, but the JOIN operator as well. To make the JOIN example as readable as possible I ignored robust coding practices.

Here’s a robustly coded version:

Finally, the demo contains some examples of concatenation…

…and a few other entries for the reader to explore if he or she is so inclined.

Your concatenation examples raise the issue of type, again. What happens when you try

SELECT city + ‘ ‘ + state + ‘ ‘ + zip

FROM orders

WHERE sales = ?

And “zip” is defined as a number field? Or if you were concatenating a mix of numbers, text, and dates? Seems like it’s time for a series on SQL functions and operators…

Isn’t defining a zip code field as a number a felony in New England?

I agree about the series on SQL functions and operators. Would you be interested in writing a guest article on the subject?

Obviously you know this Corn, but I appreciate you asking provocative questions: one trick in that case would be to use the CAST function… e.g., given a “zip_number” field, this would work:

I think you provide a much better narrative than I could. But I’d be happy to collaborate on one with you.

Thanks Corn. I’ll be in touch soon.

The temporary linking of tables so that you can use the GFN function strikes me as one of those stupid FileMaker tricks! :( (this, of course, is not your fault).

So if you go back and edit the calculation at a later date, it will fail unless you first re-link the tables.

An obvious use of ExecuteSQL is to pull values in an unrelated preference table from all over your solution (which I’m doing a lot). In that case, I guess one has to either put up with the stupid tricks or just hard code (and put up with the reference not appearing in the DDR).

Oh well.

Rob

To be more accurate, the temporary linking is only necessary if you use a custom function to extract table/column names. If you use GetFieldName() directly, FMP is smart enough to know not to worry about the lack of a relationship. It does not extend the same courtesy to custom functions.

good spotting, Corn.

Thanks

Hi Kevin, great article as always! I wanted to know if you found any performance issues using your wildcard with LIKE operator? I’m trying to construct a query using LIKE for both the % and _ wildcard operators but it is painfully slow. What I seem to be observing is FMS sending me the entire record set from the table and then having client perform the query – which is insane! Any tips for LIKE/wildcards – should they be avoided and should I be looking for other ways like through exploded keys or just scripting a find mode?

Hi Daniel,

I confess that I have not researched this very deeply. Perhaps someone with a better answer will chime in.

Regards

Kevin

Daniel, that’s true of any query. With a hefty cache on the client, the performance of subsequent queries is typically faster than the initial one but sometimes your local cache will be invalidated and you’ll have to fetch the records all over again.

LIKE is going to be slow in general. Sometimes you have no choice, but if there is any way to not use LIKE I would suggest taking it.

I am holding out hope for server-side SQL, but it’s a very tricky problem. Consider that an SQL expression in your file might reference data from files residing on different servers. The client is the only place that makes sense for evaluating that expression. As a pseudo-optimization, however, it would be great if FMP could determine whether or not a query can be performed entirely on server, and if so delegate execution to it. This is not unlike performing a Find where the criteria span tables that exist on separate servers.

Cheers,

-corn

Thanks for that explanation Corn. In the end I’ve gone with a form of locator key field on the child side and using a name given & family field on parent side with some keys – basically using a relationship – end result is its almost instant and solves the only real need they had to use a wildcard.

On the query however, are you including relationship/find queries? Those two perform the query server-side as far as I’m aware, hence why they are so damn quick, what they return may just be internal record IDs which client-side then uses to fetch the resulting data, be it all if sorted or using aggregate, or first 25 or whatever it is otherwise. Relationship or find are definitely miles ahead of LIKE or even executeSQL = in terms of performance, and in both of those you are free to use values from the current file or other files pulled through into a calculation.

thanks again.