Disclaimer: This article contains speculative and experimental techniques that are in the proof-of-concept stage. Use at your own risk and test thoroughly.

Earlier this month I had the honor and the privilege to do a presentation on the topic of Radical Separation at the PauseOnError un-conference in Portland, Oregon, which included a demo file resembling this one: virtual-calcs-part-1-v2

Before the conference I posted a pseudo-F.A.Q. which included the following…

- Q. What’s your experience with the Separation Model?

A. I’ve used it heavily over the last seven years, for a variety of vertical market applications, custom projects and, recently, on a vertical market FMGo app. - Q. I’m curious about the Separation Model, but I’ve never used it. Will this session be too advanced for me?

A. Not at all, just do some homework first. E.g., there has been detailed discussion of it on FM Forums (http://fmforums.com/search/?&q=separation%20model). - Q. Will this session include a debate of the pros vs. cons of using the Separation Model?

A. I sure hope not — these have already been discussed ad nauseam in countless online venues, including the one cited above.

Overview

Today’s article is a recap of the main portion of my presentation, but includes major improvements in the realm of robustness, so if you downloaded an earlier demo, please use today’s demo instead.

I started out the presentation by drawing a distinction between “Baseline” and “Radical” separation.

Obviously, in the real world, there are many ways this could play out, but for the purpose of this discussion, Baseline refers to a “textbook” separation scenario with two files, the first being Interface (a.k.a. “UI”) and the other being Data. You (the developer) have “ownership” of the former and the client has “ownership” of the latter… however, you have the ability to make post-deployment schema changes to the installed Data file as necessary, client ownership of same notwithstanding.

This last bit is the crucial difference between Baseline and Radical separation, because with Radical separation you will (in theory, anyway) never again have access to the Data file once it’s been deployed. An example of this might be a separated shrink-wrapped FileMaker solution, or an offline separated FileMaker Go solution. Just as in Baseline separation, you will deliver upgrades by swapping in a new UI file… but unlike Baseline separation, if you need to define a new calculated field in the Data file, you will not be able to do so in the traditional manner. However, you might be able to do so virtually, via the UI file. More on this in a minute, but first a bit more about…

Baseline Separation

In almost any separation scenario, you will keep a copy (often with test data only) of the Data file, so you can make improvements to the solution, carefully tracking any schema changes to your copy of the Data file, and then replicating those changes precisely in the client’s copy of the Data file.

Since you “own” the UI file, you don’t need to worry about tracking schema changes to that file, because when it comes time to deliver an upgrade to the client, the client’s copy of the UI file will be taken offline, and replaced with your new improved version.

With Baseline separation some developers pre-define a chunk of “reserved” fields in each table of the Data file before initial deployment. These reserved fields can simply be globals or unstored calculations… they will be repurposed at some point in the future.

The reason for doing this is to maintain internal id parity between fields in the your copy of the Data file and the client’s copy of the Data file. If that sounds like gibberish, bear in mind that FileMaker assigns an internal ID to every object in the database, including fields, which is why you can typically rename a field with impunity. And the reason you might care about this is that, at some point, unbeknownst to you, the client may choose to add some additional fields of their own to the Data file (after all, they own it).

Meanwhile you are busy adding new functionality to their solution, and let’s say this involves defining a new field in your copy of the Data file. And let’s further say that the internal ID of this field is 222. And then you place this field on a layout in the UI file.

But the client has already added, say, three new fields to the same table in their Data file which you know nothing about. So you a) log on to the client’s system remotely, b) define the new field in their copy of the Data file (which turns out to have internal ID 225), and then c) provide them with a nice new UI file update, with field 222 on a layout as mentioned above and guess what?

They don’t see what you intended them to see, because field 222 in your copy of the Data file is different than field 222 in theirs. Reserved fields are a great way to mitigate this problem. As long as you use the same reserved field in your copy of the Data file and the client’s Data file, this problem will not occur.

I typically use a simple numerical sequence rather than embedding the actual internal id in the field name; once I’ve got a block of reserved fields defined, I’ll likely want to paste it into another table (where the ids would be different). But if you’re wondering how to determine an internal id… one way would be to utilize Fabrice Nordmann’s FM_Name_ID custom function, which you can read about here: Avoiding Brittleness.

Okay, enough about Baseline separation. Let’s turn our attention now to…

Radical Separation

As mentioned previously, Baseline and Radical separation share a common assumption about the UI file, but a very different assumption about the Data file, because under the latter scenario, once the Data file has been deployed, you (the developer) will no longer have access to that file. This means that if you think you will ever need to “define” a new field in the Data file — and I enclose the word in quotation marks because obviously you won’t actually be able to do so — you will need to plan carefully before deployment.

First let’s talk about standard (non-calculated) fields. There’s nothing to stop you from predefining any number of reserved fields in each table, and you might as well create regular fields as well as globals, for example:

How many of each type? That’s tricky, because the answer is: “More than you’ll ever need.” Here I just created a few to make the point. Clearly this is more complicated than the Baseline scenario, but we’re trying to anticipate what we might need in the future. The field name doesn’t matter… if I need a number field, I’ll place zzRes_Number_01 onto a layout in my UI file and give it a user-friendly label, like “Opening Balance”, which is how users will think of it from now on… they will likely never know or need to know the true name of the field.

But what about calculated fields? Can we set aside a block of calculated fields before deployment, and then provide the definitions at some later date via a UI file update? Yes we can, and I’d like to thank Will Porter of Polytrope, for clueing me in to this possibility many years ago. In a nutshell, you define each calculation as Evalulate <syntax>, where <syntax> represents the calculation definition, and provide a mechanism to deliver this payload to the proper destination (i.e., the pre-defined calc field in the Data file) via the UI file. I refer to calculation fields configured in this manner as “virtual calcs”.

At this point, I’d like to share a couple quotations:

Solutions should be as simple as possible, but no simpler.

—Albert Einstein (attributed)

For every complex problem, there is a solution that is clear, simple and wrong.

—H. L. Mencken

In other words, we want an elegant solution, but not at the expense of robustness.

One thing I decided early on was that I wanted to be able to use the Data Viewer in the Data file, to set up and debug my definition syntax, and then store each definition as a record in a Virtual Calculation table in the UI file. (My thinking on this is evolving, but I’ll save that for a future posting.)

The advantages of the VC table are a) clarity, and b) ease of deployment. The code in the syntax field will be evaluated as written… no need to escape or transform it in any way. So how do we get from a) the VC table, to b) functioning calculations in the Data table? I tried and ruled out a number of different approaches, and I think it’s worth mentioning them here, and also saying why they were ruled out.

Note that the ability of a field to know its own name, thanks to GetFieldName (Self), can make the implementation of any of these approaches less tedious than it otherwise would be. [For more re: field name self-awareness see GetFieldName: New in FM 10, Improved in FM 11.]

I might also mention that if one is willing to accept the idea of a Virtual Calc table as a valid starting point, then the next thing to determine is whether you are going to “pull” or “push” the definitions.

Discarded Approach #1: In the interest of having as few moving parts as possible in the solution, the idea of pulling each definition directly from the VC table and into the desired virtual calc field in the Data file was quite appealing, and ExecuteSQL seemed a logical way to accomplish this.

Here’s a portion of the VC table…

…and here’s the virtual calc definition (note that while the calc result type will vary, the calcs themelves can all have the same definition thanks to GetFieldName):

The purpose of this calculated container field was to display a check mark when certain logical criteria were met. And at first it seemed to work perfectly. But over time as I navigated around, I noticed that check marks that should have been present… weren’t. In other words, the calculation would stop working for no apparent reason.

All the remaining approaches, including the one I finally settled on, involve walking the records in the VC table, and pushing the syntax out to some location (e.g., a global field or a global variable) that can be read by the expectantly waiting virtual calculation field.

Discarded Approach #2: Declare a $$variable in the Data file corresponding to each entry in the VC table. E.g., for the field Donations::zzVC_Number_01, create a variable called $$Donations.zzVC_Number_01 (we can’t have a “::” in a variable name, so we’ll use a “.” instead), and then define the virtual calc like so:

This approach was quickly discarded after I found some of my virtual calcs working fine one minute and then displaying “?” the next.

Discarded Approach #3: For each virtual calc field, create a corresponding global text field in the same table to hold the definition, e.g., a calculation field, zzVC_Number_01, would be defined as:

Evaluate ( zzVC_Number_01_Definition ; z_ModTimestamp )

…where the field being evaluated is a global text field containing the definition syntax.

This approach actually worked quite well, but was discarded because it seemed unweildy to have to create a separate “_definition” field for each virtual calc field. However, in terms of robustness I had no complaints, so would it be possible to leverage this technique into one that didn’t require individual, dedicated “_definition” fields?

The Approach: If you haven’t already done so, now might be a good time to download the demo file mentioned at the beginning of the article. And note that in the demo, the UI file is named “VC Interface” and the Data file is named “VC Tables”.

With the preceding approach appearing to meet the robustness criteria, I wondered whether it would be possible to consolidate all those “_definition” fields into a single global repeating field per table. So, in each table I defined an identically-named global text field with 32,000 repetitions (i.e., the maxium number of reps possible), like so:

…with the idea that the UI file at startup could walk the Virtual Calc table, and push the definition syntax from each record into a specific rep of the above field. How would it know which rep to push to, and for that matter, how would the corresponding virtual calculation field in the Data file know which rep to evalulate?

Let’s start with the “push” process from the Virtual Calc table in the UI file. As you can see in the demo, we store the table name, field name, and definition syntax in this table, and we can use the numeric portion of the field name to determine the corresponding target repetition in zzVirtualCalcDefinitions.

So, we’re going to walk these records at startup and push the definitions in the syntax field into the waiting global repeater, zzVirtualCalcDefinitions, in either the Donations or the Donors table, via this script:

After this script runs, we should be able to use the Data Viewer to confirm that the target reps in the Data file have been properly loaded, e.g., the embedded 00013 here in the VC table…

…should correspond to rep 13 of zzVirtualCalcDefinitions in Donors. Does it?

Indeed it does. So much for pushing from the VC table… what do the virtual calcs look like over in the Data file? The calc syntax is identical for all of them, but of course the result type varies accordingly.

The major difference between this method and the one I showed a few weeks ago in Portland, is that now I am using the field name to derive the repetition number, whereas the method I showed in Portland used the internal field id to derive it. While visually the results were identical, I discovered there were intermittent sorting and searching failures with the former method, whereas so far the current method has been rock solid.

Incidentally, in case it isn’t completely obvious, I don’t view anything written here to be the final word on the subject. I hope this article is the beginning of a conversation, and it may well be that I was too hasty in ruling out one of the “discarded” approaches above, missing out on some crucial detail that would have made it viable (i.e., reliable), in which case I trust someone will point out the error of my ways.

One unexpected “gotcha” I discovered with this method — and, on reflection, it shouldn’t have been unexpected after all — occurred with this virtual calculation definition I created to generate what I like to think of as a “combo name” in Donors:

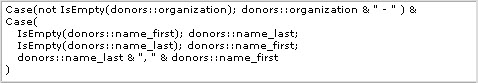

It worked as expected… in Donors… but when I placed a related version of the calc field onto the Donations layout, it defiantly and resolutely refused to display anything at all. Thank you to Jeremiah Small for pointing out the reason: I had neglected to “fully qualify” my field names (i.e., tableOccurrence::field) so FileMaker couldn’t resolve the virtual calc from the context of Donations. I changed the virtual calc definition to…

…and, voila, problem solved.

If I had followed my own prescription, and defined the virtual calc definition in the Data Viewer before pasting it in, the problem never would have happened in the first place (since the Data Viewer forces you to fully qualify all field names). But this does point out a subtle distinction between virtual calcs and geniune FileMaker calculations, because unqualified field names would not have caused this problem in a normal FileMaker calculation.

Conclusion

As I mentioned at the outset, this is the central portion of the material I presented at PauseOnError earlier this month. After the presentation, I was approached by Barry Isakson of Architectronica, with some ideas to a) reduce the field count, b) solve the “define more fields than you’ll ever need” problem, c) accommodate summary fields (which I conveniently avoided mentioning today), and d) make the process less opaque to DDR analysis tools such as BaseElements and Inspector.

We’ll explore these possibilities in upcoming installments of this series.

hi kevin,

again, a great article and, as always, a lot to learn from you!

thank you for your work!

p.

I appreciate the kind words, Pixi!

Although I wish this type of functionality were built into FM, your maturing “Proof-of-Concept” is intriguing and compelling.

Thank you, Kevin — for all of the hard work you do sharing FileMaker knowledge to the public. It was my pleasure to meet you face-to-face in Portland at PoE.

– – Scott

And the pleasure was mutual.

Wow… that got my brain working…

That is a really good insight into something I have been thinking about… but it has been wandering around my grey matter in a confused loop for years.

Suddenly some clarity…

Thanks Kevin

Thank you Lyndsay.

Interesting. In your very last example, you probably would not need two versions of the calc if you always use a fully qualified field name.

You hit the nail squarely on the head Jeremiah. Article and demo file have been revised accordingly. Thanks so much.

Hi Kevin,

Thanks a lot for the time and effort put into it.

It is one of the most desirable thing that every developer wanted to do. However, often running “short of time” (excuses for the complexity involved) to resolve this issue.

If I didn’t remember wrongly, I came across an article introducing the term, Logic Separation (LS), which has a similar idea. However, I guess as the idea was mentioned together with Data Separation (DS) and DS had attract much attention by developers that LS was forgotten.

Again, if I didn’t remember wrongly, the idea of LS was first introduced after either FM7 or FM8. A true DS at that time was already a great challenge, however it is seemingly more and more feasible with the later versions of FM. And, I believe LS will subsequently be possible. That is, the Data File will store only Raw Data (only input fields, no calculation and summary fields).

Then, the reserved fields created before delivered to clients would be for the future raw data. All calculation and summary fields store in a LS file. For a start, may consider storing all LS fields in UI file (so developer can edit them if needed). Ideally, LS fields should be store in separate file so that developer has the choice to open LS fields and/or UI for certain clients who want to do some simple editing.

At this point of writing, I am only thinking of Field Definition (Calculation and Summary where Execute SQL could help). However, other FM elements are also needed to be considered in the modelling. Hopefully, some nice guy can think of a good solution and share it. Thanks!

Regards,

Omega Goh

I found this article very intriguing, thanks for putting it out there for us to see. I remember playing a bit with similar concepts a bit with Filemaker 8, but didn’t take it nearly this far!

One thing did occur to me while reading this. Your calculated fields would have to be either unstored, or else stored and automatically update when some trigger field is modified. If your table gets wide and you have a fair number of calc fields evaluating at record commit time, then your commit time is going to balloon, in proportion to the number of calcs and the complexity of evaluating each one. Remember that the calc engine will have to instantiate itself with each one. While a desktop client might see a pause of a second or so, an iPad or iPhone will see a bigger performance hit. I mention this because we initially deployed an auditing solution that created audit entries in an audit field at commit time, and eventually discarded it for exactly that reason. Once we were auditing a fair number of fields, the calcs at commit time slowed it unacceptably.

There’s also the part of the equation where Filemaker Server maintains state information, including the contents of global fields, but not global variables. So while global fields are slower than global variables to read and write, if you involve global fields in calculations, Filemaker Server may be able to help out a bit in the processing, depending of course on what you are doing. Remember that any calc involving a record search, will (wherever it can) offload the search itself to the server side if the server has the state information to do so.

All in all, some very interesting ideas, and I look forward to seeing where you take them!

Peter

Thanks Peter… I appreciate your thoughtful comments and you taking the time to write them up.

In this technique, I’m not creating or modifying records at commit time, the way one would when generating an audit trail.

The calculation fields are unstored in this process. I don’t believe that there will be an unacceptable performance hit at record commit time, unless you have a huge number of these fields visible on a given layout or something else that forces the virtual calc fields to evaluate.