Update 29 June 2018: see Virtual List Reporting with JSON Arrays for updated thoughts on this topic.

Today we’re going to look at an alternative approach to the multi-window VLR technique we examined last month in Virtual List Reporting part 3, utilizing and extending techniques introduced in last month’s JSON + Virtual List. To avoid needless repetition, today’s article will assume the reader is familiar with that material.

Demo files: vlr-plus-json-v1 and vlr-plus-json-v2

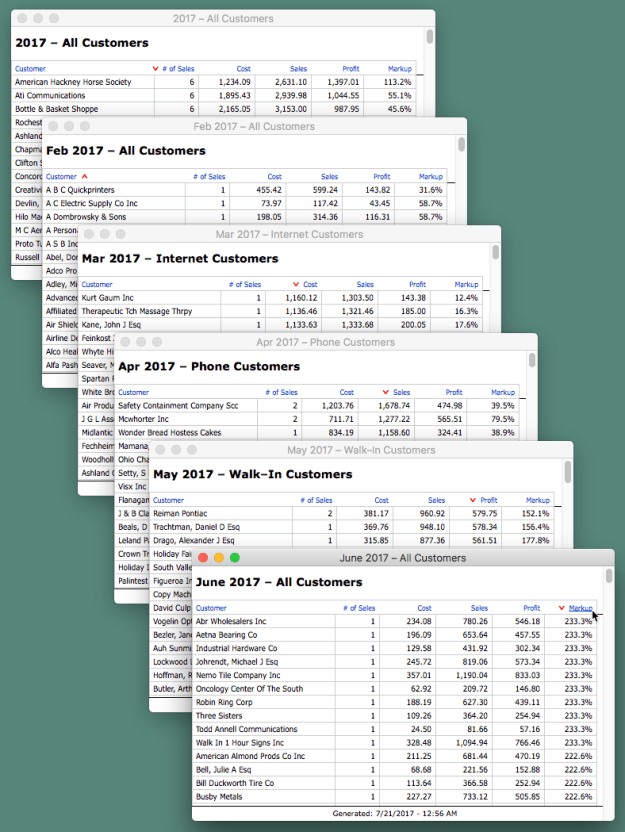

As you may recall from VLR Part 3, we have a system that allows us to spawn as many separate reports as we wish (each in its own browse mode window) and sort each report independently via clickable column headings.

Demo 1 – VLR + JSON, v1

This is based on the demo we saw in Virtual List Reporting, part 3, with this difference: instead of generating hundreds or thousands of individual $$var[reps], each report will generate a single JSON object containing name/value pairs, e.g.,

The demo includes three options re: JSON formatting…

…and here’s why: if you’re going to build a large block of JSON, when (and whether) you format it can make a big difference re: total time to generate (and render).

The JSON object is generated via a loop that adds a new “element” (set of name/value pairs) with each iteration, and at first I was planning on simply going with the “For Each Iteration” option, i.e., adding a new element to the JSON object like so:

…but it turns out to be faster to generate “raw” JSON…

…and only format it prior to display, and even faster to dispense with formatting altogether… in which case it will be a little rough on human eyes, but perfectly parse-able as far as reporting is concerned.

How much faster? Using July 2017 and Type = “All” as the baseline, and restarting FileMaker between each test to rule out any effects of caching, here are some timings (FMPA 16v2 running locally on my aging Windows laptop):

Hmmm… even the best time is pretty sluggish isn’t it? And note that decode time is significantly longer than encode time. This raises two questions:

#1. How large is the JSON object? Formatted is 93 Kb; unformatted is 62 Kb.

#2.What is happening during decoding? The virtual list table a ) looks like this…

…and b ) parses the JSON like so:

Explanation

1. For each row and column (a.k.a. “cell”) in the virtual list able, assign coordinates based on row ID and repetition number.

2. Use those coordinates to reach into the JSON object and extract the value (a.k.a. “element”) corresponding to those coordinates.

So, it would appear that the larger the JSON object, the slower the decode time. And note that the report includes a sort on an unstored calculation, and that time to sort significantly impacts rendering time.

Can we do anything to improve performance? Indeed we can, which brings us to…

Demo 2 – VLR + JSON, v2

Demo 2 is identical to demo 1 except… a ) instead of creating one large JSON object, it generates one individual object per customer, and b ) the rendering logic has been modified accordingly.

So, now the JSON objects look like this (formatted)…

…or this (unformatted)…

…and the rendering logic in the virtual list table looks like this:

Does it make a difference in the timings (July 2017, Type = All)?

Affirmative, and quite an improvement over demo 1.

What if we expand the criteria to look at all 2016 entries…

…so roughly 12x more data than the preceding?

Conclusions

1. A bunch of small JSON objects will encode and decode significantly faster than one large equivalent object.

2. Applying JSONFormatElements to a small JSON object will not cause a discernible performance hit.

3. However, applying JSONFormatElements to a large-ish JSON object will cause a discernible performance hit.

Every FileMaker JSON function scans the entire input object to validate that it is in fact valid JSON. This incurs a computational cost roughly linear with respect to the size of the JSON input. This is why JSONFormatElements takes less time on smaller inputs than larger inputs, and why parsing all the data out of a series of smaller objects is faster than parsing the same data from a larger object. When reading all the data from a large object with nested structures, folks should expect to get better performance parsing the data by parsing the nested structures into new variables first, then parsing details from the new, smaller variable, rather than parsing everything directly from the original large object.

Thanks Jeremy.

Nice post! Always good to get performance metrics on alternative methods.

I may be wrong, so please let me know if I’m missing something here, but I’n not seeing a good justification to use JSON to drive a virtual list. It seems to take more work to create and is then slower to load.

Am I missing something?

Hi Karstyn,

That’s a valid question. I suspect a growing number of people will be using JSON in conjunction with virtual list, so it’s probably a good idea to have some familiarity with a ) implementation details, and b ) pros and cons. Demo #2 is pretty fast, but I haven’t (yet) bench marked it against a couple other VLR approaches I have in mind.

Stay tuned.

Kevin